Get HTTP Header Info from Web Sites Using curl

The easiest way to get HTTP header information from any website is by using the command line tool curl. The syntax to retrieve a website header goes like this:

curl -I url

That is a capital ‘i’, not a lowercase L. The capital I makes curl send a HEAD request, so only the header information comes back.

Try it out yourself with a sample URL. Here’s an example that retrieves the header for Google.com:

curl -I www.google.com

Again, use the capitalized I if you only want the site header. A lowercase i prints the header followed by the full page source, which is a ton of minified HTML. In that case, scroll up in the terminal window to the lines directly following the curl command to find the HTTP header information.

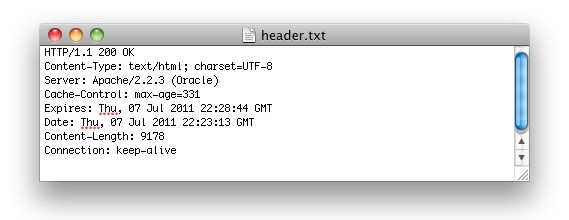

An example of HTTP header details retrieved by curl -I might look something like this:

HTTP/1.1 200 OK

Date: Thu, 07 Jul 2014 22:15:57 GMT

Expires: -1

Cache-Control: private, max-age=0

Content-Type: text/html; charset=ISO-8859-1

Set-Cookie: PREF=ID=741dreb25486514f:FF=0:TM=13154488957:LM=15526957:S=kmFi3jKGDujg; expires=Sat, 06-Jul-2013 22:15:57 GMT; path=/; domain=.google.com

Set-Cookie: NID=48=8jFij8f8Lej115z89237iaa8sdoA8akjak8DybmLHXMC6aNGyxM8DnyNv-iYjF09QhiCq2MdM3PKJDSFlkJalkaPHAU4JQy7MM8MKDQKEFLPqzoTSBPLKJLKMmdILlkdjel; expires=Fri, 06-Jan-2012 22:15:57 GMT; path=/; domain=.google.com; HttpOnly

Server: gws

X-XSS-Protection: 1; mode=block

Transfer-Encoding: chunked
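If you only care about one field from output like this, a small shell function can pick it out. This is a minimal sketch assuming a POSIX shell with awk available; header_value is a made-up helper name, not something curl provides.

```shell
# header_value NAME: read HTTP headers on stdin and print the value(s) of NAME.
# (header_value is a hypothetical helper, not part of curl.)
header_value() {
  awk -v name="$1" '
    BEGIN { name = tolower(name) }   # header names are case-insensitive
    {
      sub(/\r$/, "")                 # header lines end in CRLF; drop the CR
      i = index($0, ":")
      if (i > 0 && tolower(substr($0, 1, i - 1)) == name) {
        v = substr($0, i + 1)
        sub(/^[ \t]+/, "", v)        # drop whitespace after the colon
        print v
      }
    }'
}

# Usage (needs network access; -s hides the progress meter):
#   curl -sI www.google.com | header_value Server
```

A header that appears more than once, such as Set-Cookie, is printed once per occurrence.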

An easy way to get around all the HTML, JavaScript, and CSS nonsense is to use the -D flag to save the header itself into a separate file, and then open that file in your preferred text editor:

curl -iD httpheader.txt www.apple.com && open httpheader.txt

This is the same curl command as before with a few modifiers. The -D flag writes the response headers to httpheader.txt, and because -i is still set, the headers and page source are also printed in the terminal. The double ampersand runs the second command only if curl exited successfully. Using ‘open’ will open httpheader.txt in the default GUI text editor, which is generally TextEdit, but you could use vi, nano, or any of your preferred command line tools:

curl -iD httpheader.txt www.apple.com && vi httpheader.txt
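If you would rather not have the page source scroll past at all, one option is to drop -i and throw the body away while -D still saves the headers. A rough sketch, assuming a POSIX shell; save_headers is a hypothetical wrapper name, and the flags used (-s, -D, -o) are standard curl options.

```shell
# save_headers URL FILE: write only the response headers of URL to FILE.
# (save_headers is a hypothetical helper name, not part of curl.)
save_headers() {
  # -s   hide the progress meter
  # -D   dump the response headers to the given file
  # -o   send the response body to /dev/null instead of the terminal
  curl -s -D "$2" -o /dev/null "$1"
}

# Usage (needs network access):
#   save_headers www.apple.com httpheader.txt && open httpheader.txt
```

Unlike -I, this still performs a normal GET request, so the saved headers are the ones the server sends along with the full page.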

curl is a powerful utility that is worth familiarizing yourself with. Anyone involved with the web should get some good use out of the header trick, and web developers can also use curl to copy all the HTML and CSS from a website very quickly. The other advantage to curl is that it’s available for virtually every operating system out there: it’s bundled with just about every version of Mac OS X and Linux, and you can also find versions for Windows and even Android and iOS through individual apps. Because curl has a long history and the commands are universal across platforms, it’s really the ideal choice for pulling header details, and is a valuable tool for systems administrators, network admins, web developers, and many other technical professionals.

Update: Updated flags from -i to -I by reader recommendation, thanks everyone!

I use this method in a continuous loop when checking in online for Southwest flights. This allows me to obtain the server’s exact time so I know when to hit the “Check-in” button to ensure I get a Group A boarding spot! :)

That sounds like a really interesting strategy. Do you just check once for the server time and then schedule your check-in accordingly?
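For anyone curious, a loop like the one described could look roughly like this. It is only a guess at the approach, assuming a POSIX shell; poll_server_date, its arguments, and its defaults are made up for illustration, and the URL in the usage line is a placeholder.

```shell
# poll_server_date URL [COUNT] [INTERVAL]
# Print the server's Date header COUNT times, sleeping INTERVAL seconds
# between requests. (Name and defaults are illustrative assumptions.)
poll_server_date() {
  url=$1
  count=${2:-5}
  interval=${3:-1}
  n=0
  while [ "$n" -lt "$count" ]; do
    # -s hides the progress meter, -I requests headers only;
    # tr strips the CR that ends each header line
    curl -sI "$url" | tr -d '\r' | grep -i '^date:'
    n=$((n + 1))
    if [ "$n" -lt "$count" ]; then
      sleep "$interval"
    fi
  done
}

# Usage (needs network access; the URL is a placeholder):
#   poll_server_date http://www.example.com 10 1
```

Keep in mind the Date header only has one-second resolution, and network latency adds some slack on top of that.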

curl -I http://www.google.com

-I/--head

(HTTP/FTP/FILE) Fetch the HTTP-header only! HTTP-servers feature the command HEAD which this uses to get nothing but the header of a document. When used on a FTP or FILE file, curl displays the file size and last modification time only.

Use a capital ‘i’ to display only the headers:

curl -I http://www.google.com

HTTP/1.1 200 OK

Date: Fri, 08 Jul 2011 15:22:39 GMT

Expires: -1

Cache-Control: private, max-age=0

Content-Type: text/html; charset=ISO-8859-1

Set-Cookie: PREF=ID=887fd41683939d85:FF=0:TM=1310138559:LM=1310138559:S=JC01XqwewmutBjaa; expires=Sun, 07-Jul-2013 15:22:39 GMT; path=/; domain=.google.com

Set-Cookie: NID=48=iRxY_rAFt4NCBGeRnAsqWZoxoXd6QuWODyBppeBLvmhYwBXLB2EPH-DyBns5hb4poiH2tz_WekVG0-KZ-QeWMccad3l2E443pEpctCerqrZjmzFvFp1014VANg2cBzV7; expires=Sat, 07-Jan-2012 15:22:39 GMT; path=/; domain=.google.com; HttpOnly

Server: gws

X-XSS-Protection: 1; mode=block

Transfer-Encoding: chunked

Post has been updated, thanks.

Too much text to scroll through? DUDE.

curl -i http://www.google.com | more

Handy for any occasion.

Nice one. I just need the status code; grep for HTTP and you will get that.
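Building on the grep idea, here is a small sketch that prints just the numeric code instead of the whole status line. It assumes a POSIX shell with awk; status_code is a hypothetical helper name.

```shell
# status_code: read response headers on stdin and print the numeric status.
# If several responses are present (for example after redirects), the last
# status line wins. (status_code is a hypothetical helper, not part of curl.)
status_code() {
  tr -d '\r' | awk '/^HTTP\// { code = $2 } END { if (code != "") print code }'
}

# Usage (needs network access):
#   curl -sI http://www.google.com | status_code
#
# curl can also report the code by itself through its --write-out option:
#   curl -s -o /dev/null -w '%{http_code}\n' http://www.google.com
```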