How to Create a Tar GZip File from the Command Line

You’re probably familiar with making your own zip files if you’ve ever needed to transfer a group of files or if you’re managing your own backups outside of Time Machine. Using the GUI zip tools is easy and user friendly, but if you want more advanced options with better compression, you can turn to the command line to make a tar and gzip archive. The syntax will be the same in Mac OS X as it is in Linux.

Creating a Tar GZip Archive Bundle

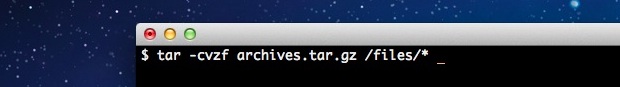

From the command line (/Applications/Utilities/Terminal.app), use the following syntax:

tar -cvzf tarballname.tar.gz itemtocompress

For example, to compress only the jpg files in a directory, you’d type:

tar -cvzf jpegarchive.tar.gz /path/to/images/*.jpg

The * is a wildcard here, meaning anything with a .jpg extension will be compressed into the jpegarchive.tar.gz file and nothing else.
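To confirm what the wildcard actually picked up, you can list the archive with -t instead of extracting it. The sketch below works in a throwaway directory, and the file names (a.jpg, b.jpg, notes.txt) are made up for illustration rather than taken from the article:

```shell
# Build a scratch directory with two .jpg files and one file that should be skipped.
workdir=$(mktemp -d)
mkdir "$workdir/images"
touch "$workdir/images/a.jpg" "$workdir/images/b.jpg" "$workdir/images/notes.txt"

# The shell expands *.jpg before tar runs, so tar only ever sees the .jpg paths.
# The subshell keeps the cd from affecting the rest of the session.
(cd "$workdir" && tar -czf jpegarchive.tar.gz images/*.jpg)

# -t lists the archive contents without unpacking anything.
listing=$(tar -tzf "$workdir/jpegarchive.tar.gz")
echo "$listing"
```

Only the two .jpg paths should appear in the listing. The scratch directory can be deleted afterwards with rm -rf "$workdir".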

The resulting .tar.gz file is actually the product of two different tools. tar packages a group of files into a single bundle but doesn’t offer compression on its own, so to compress the tar file you add gzip compression. You can run these as two separate commands if you really want to, but there isn’t much need because the tar command offers the -z flag, which automatically gzips the tar file.
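For comparison, here is a minimal sketch of the two-step route next to the one-step -z route. The directory and file names (docs, readme.txt) are placeholders invented for the example:

```shell
# Scratch data to archive.
workdir=$(mktemp -d)
mkdir "$workdir/docs"
echo "hello" > "$workdir/docs/readme.txt"

# Step 1: tar bundles the directory into one uncompressed file.
tar -cf "$workdir/docs.tar" -C "$workdir" docs

# Step 2: gzip compresses that file in place, renaming it to docs.tar.gz.
gzip "$workdir/docs.tar"

# One step: -z tells tar to apply the gzip compression itself.
tar -czf "$workdir/docs-onestep.tar.gz" -C "$workdir" docs

ls -l "$workdir"
```

Both archives hold the same files. Note that gzip replaces docs.tar with docs.tar.gz rather than keeping both.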


Opening .tar.gz Archives

Unpacking the gz and tar files can be done with applications like Pacifist or The Unarchiver (free), or by going back to the command line with:

gunzip filename.tar.gz

Followed by:

tar -xvf filename.tar

Generally you should untar things into a dedicated directory. Otherwise the present working directory will be the destination, which can get messy quickly.
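One way to keep things tidy is tar’s -C option, which switches to a target directory before extracting. This is a sketch with a throwaway archive; the names photos, one.txt and unpacked are assumptions made for the example:

```shell
# Create a small archive to unpack.
workdir=$(mktemp -d)
mkdir "$workdir/photos"
echo "sample" > "$workdir/photos/one.txt"
tar -czf "$workdir/filename.tar.gz" -C "$workdir" photos

# Create the destination first; -C makes tar change into it before extracting.
mkdir -p "$workdir/unpacked"
tar -xzf "$workdir/filename.tar.gz" -C "$workdir/unpacked"

ls -R "$workdir/unpacked"
```

Everything from the archive lands under the unpacked directory instead of wherever the shell happens to be.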

Might be nice to include how to use the compression level of gzip. Something like the following in bash:

$ GZIP=-9 tar -czf examplearchive.tar.gz examplearchive/
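Whether the GZIP variable takes effect depends on which tar and gzip are installed, so treat it as something to verify on your own system. An alternative that does not rely on the variable is to pipe tar’s output through gzip yourself. This is a sketch with made-up sample data:

```shell
# Sample directory with compressible content.
workdir=$(mktemp -d)
mkdir "$workdir/examplearchive"
seq 1 5000 > "$workdir/examplearchive/data.txt"

# "-f -" writes the tar stream to stdout; gzip -9 then applies maximum compression.
tar -cf - -C "$workdir" examplearchive | gzip -9 > "$workdir/examplearchive.tar.gz"

# gzip -t checks the compressed stream for corruption and prints nothing on success.
gzip -t "$workdir/examplearchive.tar.gz" && echo "archive OK"
```

The result is an ordinary .tar.gz that unpacks with tar -xzf like any other.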

thanks!

I tried to convert a file to the tar.gz format from the command line, but I get an error: “‘tar’ is not recognized as an internal or external command, operable program or batch file.”

Do I need to install a program or do something else before running the command “tar -cvzf jpegarchive.tar.gz /path/to/images/*.jpg”?

Please help!

What OS are you using? I don’t know if non-standard Linux distros have tar by default yet. I know all the mainstream distros and *cough* Mac do, but some minimal or non-standard Linux distros might not.

[…] it just compresses them. If you want to add a group of files to a bzip archive you’d want to use tar beforehand. Some versions of tar even support creating bz2 archives natively with the -j flag, but […]

A .tar, .gz or .tar.gz file also unpacks with the default “Archive Utility”, at least on 10.7.

That said, I think it’s a good idea to have people use the command line, if only to get a feeling that under the hood there is something else than icons and windows.

I always advise people to use Linux from time to time, rather than just the Mac 100% of the time.

The benefit of using Linux as an alternative is that it forces you to gain useful technical knowledge that also applies to the Mac. It’s like driving stick-shift.

Whereas… using Windows is a waste of time, I find.

Umm, Windows has its uses, such as gaming, but yes, using Linux is like driving a stick-shift Mac. I use Linux or Windows by preference, since I have no real use for *cough* expensive garbage.

No, there is nothing in driving a stick shift that gives you any deep insights to “the art of driving”.

Same goes for unix, it won’t teach you anything useful as such. It’s really, really useful though.

Thanks for writing this. Most of us have a hard time remembering stuff like this.

I forget things like this, so it’s nice to have the refresher. Sometimes we have our heads so deep in the sands of iOS and OS X that we forget the core of both operating systems is BSD Unix.

Old school knowledge, refreshed for the new generation of UNIX / Mac users. Thanks for writing it all out. Top notch.

Seriously, who the hell doesn’t know how to create a tarball from the command line?

Yikes, have the script kiddies taken over or something?!

I have no trouble creating the .tgz. The trouble for me is creating the tarball in a separate directory without removing the originals, and a checksum would be nice too. I just want to make sure everything went OK before I remove the files I want to send. Google indexed this page and linked me here, and though it’s interesting, I still think I should consult the man page, because I started picturing doing something with the pipe operator and I’m confused about how James sent anything like that without creating a named pipe.

Just baffled right now. My e2fsck and fsck repair today brought back everything I can remember, and it seemed more organized, all numbered in lost+found. I’m sure I destroyed the file table, but since I know the physical layout of my flash, it wasn’t too difficult. Now, in this sleepy state, I say: personal butler, tgz directly to another drive without removing the originals or trying to make a local copy first, because space is scarce. Otherwise it would be done already. And now I’m inspired to create my first named pipe, mostly just for the fun of it, and hey, why not: I just did my first fsck recovery and it was easy. I also didn’t know ncat could do that. That tool is handy. Thanks for the read, guys.
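For the case described here, tar can write the archive straight to another location and leave the originals alone, and the result can be checked before anything is deleted. The sketch below uses two temporary directories as stand-ins for the source folder and the other drive, and backup.tgz is a hypothetical name. cksum is used only because it is available on both OS X and Linux; a stronger hash tool could be substituted:

```shell
src=$(mktemp -d)      # stands in for the directory you want to send
dest=$(mktemp -d)     # stands in for the other drive
echo "important" > "$src/file1.txt"
echo "also important" > "$src/file2.txt"

# Write the archive straight to the destination; tar only reads the originals.
tar -czf "$dest/backup.tgz" -C "$src" .

# Verify before deleting anything: gzip -t tests the compressed stream,
# and tar -t confirms the archive can be read end to end.
gzip -t "$dest/backup.tgz"
tar -tzf "$dest/backup.tgz" > /dev/null && echo "archive readable"

# Record a checksum next to the archive so the receiver can compare.
cksum "$dest/backup.tgz" > "$dest/backup.tgz.cksum"
cat "$dest/backup.tgz.cksum"
```

No local copy of the archive is made on the source side, which matters when space is scarce.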

TBH, I can’t remember the command, so this article is my go-to when I need to tar and gzip something.

You really want a mind bender, how’s this for fun:

Had to copy hundreds of thousands of small files from a Linux server to a Mac over the LAN. A normal cp operation is slow because it never gets a chance to increase the network bandwidth as it resets for each individual file. Watching a performance graph would show it peaking and falling for every individual file. So the solution would be to tar gzip the files into one huge file and then copy that file. Trouble was, there was not enough free disk space to handle the huge file.

Netcat to the rescue! Netcat is a very powerful tool: it can open a raw network port and pass data through that port, and it can listen for a raw data stream on the other end. So the trick is to tar gzip the files and pipe the output (no filename) into nc, directing nc to a network port number of your choice. Then on the receiving computer you listen with nc and pipe its data to a command that untars and ungzips the files.

To give you an idea of how effective something like that is: the attempted copy operation over an SMB share ran for 5 hours and the Finder was still just calculating the number of files, which at that point was well over 500,000 files! In other words, nothing had actually started to be copied. I tried using the cp command from Terminal and it was only about a third of the way through the copy process after running overnight. I aborted the copy process, set up nc to listen on a particular port, and piped its output to a tar gz command that would untar and ungzip the files to the required path. Then on the sending system I created a tar gzip command and piped its output to nc, directing it to the IP address of the receiving computer and the same network port. The copy operation finished in 2 hours, and looking at the network activity, the Gigabit Ethernet adapter was almost completely saturated at 100%. The performance graph rapidly peaked and stayed completely peaked the entire time the copy process ran.

So essentially, the sending system ran the tar and gzip operations, buffering the data in RAM while it pushed that data through netcat to a waiting netcat listening on a predetermined network port, where the stream was piped to a tar gzip command that decompressed and unpacked the files. Since there was a large, continuous stream of data pumping through the network port, throughput ramped up quickly until it maxed out the Ethernet card. This allowed the copy to run as fast as it possibly could.
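The shape of that pipeline can be tried locally without a second machine. In this sketch one tar feeds another directly through a pipe. The nc lines in the comments show roughly where netcat would sit, but the flags, host name and port there are assumptions, since netcat variants differ in their options:

```shell
src=$(mktemp -d)
dest=$(mktemp -d)
mkdir "$src/files"
echo "one" > "$src/files/a.txt"
echo "two" > "$src/files/b.txt"

# Local stand-in for the network transfer: the sending tar writes the compressed
# stream to stdout ("-f -") and the receiving tar reads it from stdin.
# No intermediate archive file is ever written to disk.
tar -czf - -C "$src" files | tar -xzf - -C "$dest"

# Over the network, nc would sit in the middle of that pipe. Roughly (assumed
# flags, hypothetical host and port; check "man nc" on both machines):
#   receiver:  nc -l 9999 | tar -xzf - -C /path/to/destination
#   sender:    tar -czf - -C /path/to/source files | nc receiver-host 9999

ls -R "$dest"
```

Because the stream never touches the disk as a single archive, this works even when there is no free space for the tarball itself.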

The mind blowing part? These tools are built in to OS X and nearly every Unix system, and they have been around for more than 20 years. The whole Unix concept is small tools that do one thing very well, connected to one another via the pipe operator.

The funny part? I’ve been around the block for years and Windows cannot do this at all. I don’t even know of a single third party application that comes close to the way I was able to use tar gzip and netcat to speed up copying an enormous amount of data. But I can boot a Windows box with a Linux USB flash drive and do it!

Let’s have a few more articles about interesting things you can do with the Unix pipe and some little known command line programs!

Disclaimer: The sending system was Linux running a newer GNU version of netcat while OS X includes the older FreeBSD version. I had to use homebrew to install the GNU version of netcat on OS X to get it to work with the Linux system. The command parameters vary a bit between the two netcat programs and I was unable to get the built-in OS X version of netcat to play nice with the Linux GNU version.

Homebrew is a way to install Linux tools on OS X, and it’s really simple and easy: http://mxcl.github.com/homebrew/. For example, OS X includes the curl command, but maybe you want the wget command, which is similar, because you are more familiar with it. Homebrew installs the add-on tools into a location that won’t interfere with the normal OS X tools, and if their names are the same, it will use the Homebrew one first. Homebrew will not mess up your Mac, but it might confuse someone else trying to work on your Mac.

You can use the xzvf switches when untarring to avoid needing to run gunzip separately.

e.g.

tar xzvf myTarFile.tar.gz

Also, you may find tarred and gzipped files with a .tgz extension.

The file extension doesn’t actually matter on these, but your readers should know this lil’ factoid.
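A small sketch of that point: the same archive copied under three names unpacks identically, because tar goes by the -z flag and the file contents rather than the name. All file names here are invented for the example:

```shell
workdir=$(mktemp -d)
mkdir "$workdir/stuff" "$workdir/out"
echo "data" > "$workdir/stuff/item.txt"

# Same gzip-compressed tar data under three different names.
tar -czf "$workdir/stuff.tar.gz" -C "$workdir" stuff
cp "$workdir/stuff.tar.gz" "$workdir/stuff.tgz"
cp "$workdir/stuff.tar.gz" "$workdir/stuff.whatever"

# Extraction works regardless of the extension.
tar -xzf "$workdir/stuff.whatever" -C "$workdir/out"
cat "$workdir/out/stuff/item.txt"
```

Sticking to .tar.gz or .tgz is still worthwhile, since GUI tools and other people rely on the extension to guess the format.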